PORTFOLIO PROJECT

arena

2026

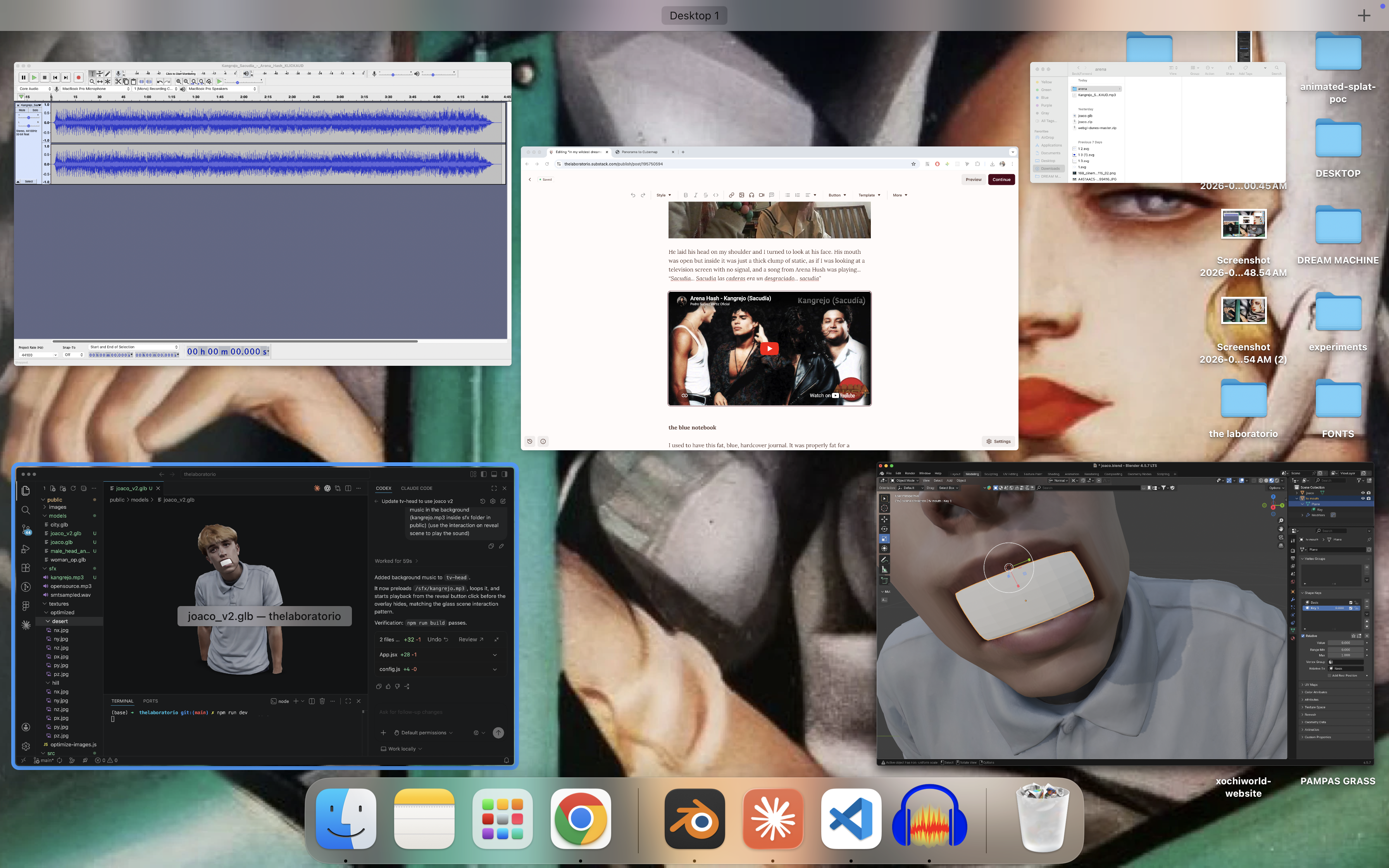

ARENA is a short interactive sequence inspired by a short story I wrote for The Laboratorio. The piece translates the atmosphere of the writing into a cinematic, browser-based scene featuring an animated 3D avatar inside a surreal environment, with two interaction modes that let the user control the character through either cursor input or face gestures.

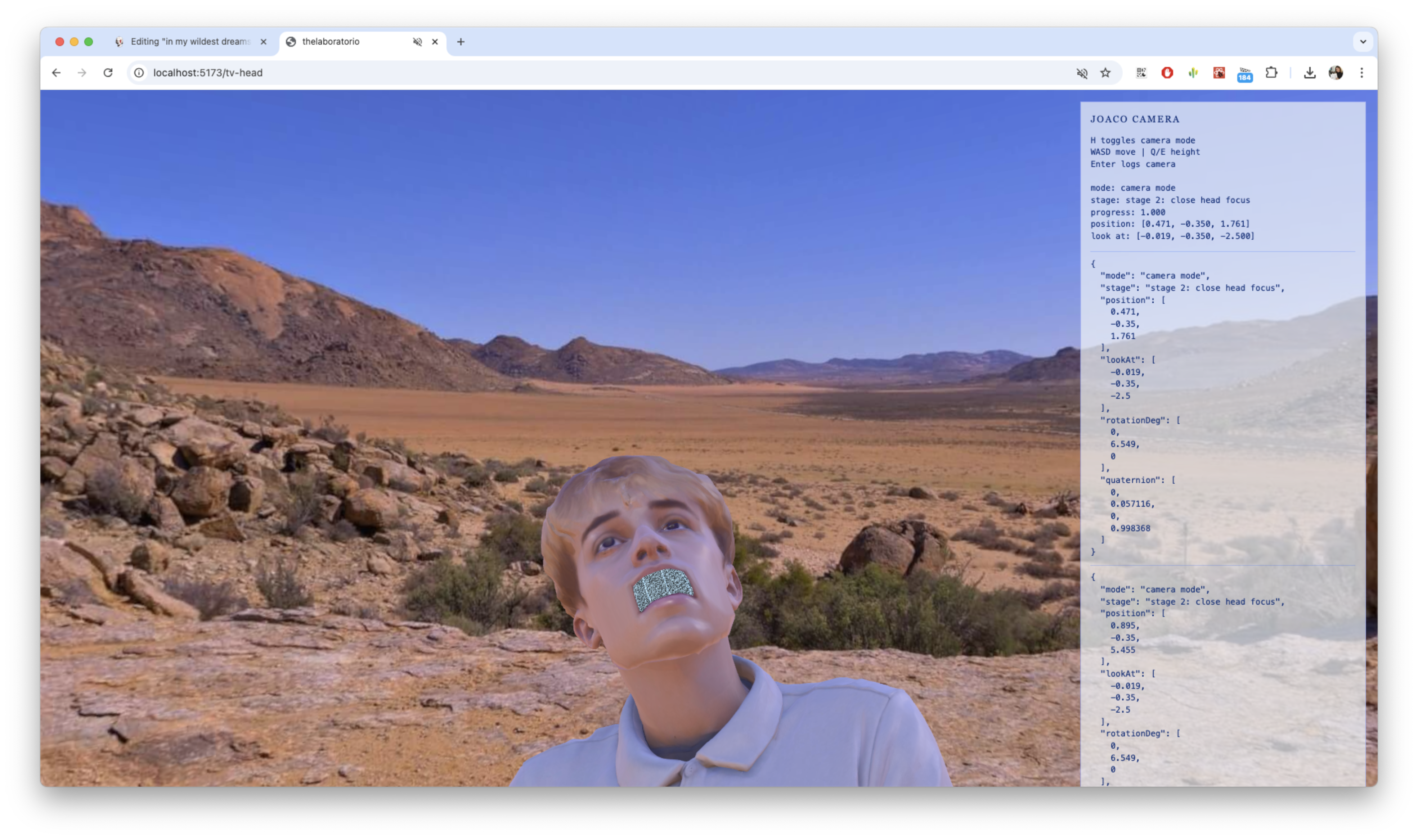

I used Tripo AI and a reference image to generate the initial 3D avatar, then brought the model into Blender to optimise, sculpt, and remodel it for the scene. I also created blend shapes for mouth animation in Blender, allowing the avatar to feel more expressive and alive within the sequence. The environment was built around a customised skybox, with shaders used to distort, enhance, and add visual depth to the scene, including displacement textures generated from diffuse maps.
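As a rough illustration of deriving a displacement texture from a diffuse map, the sketch below converts RGBA pixels into a normalised grayscale height map. It is a minimal example under stated assumptions, not the project's actual code: the `diffuseToHeight` helper is hypothetical, it assumes 8-bit RGBA input such as the data returned by a canvas `getImageData()` call, and it uses the standard Rec. 709 luma weights as one common way to turn colour into height.

```typescript
// Hypothetical helper: turn 8-bit RGBA diffuse pixels into a height map in [0, 1].
// Assumption: brighter diffuse pixels should displace further than darker ones.
function diffuseToHeight(rgba: Uint8ClampedArray): Float32Array {
  const height = new Float32Array(rgba.length / 4);
  for (let i = 0; i < height.length; i++) {
    const r = rgba[i * 4];
    const g = rgba[i * 4 + 1];
    const b = rgba[i * 4 + 2];
    // Rec. 709 luma weights; the alpha channel is ignored.
    height[i] = (0.2126 * r + 0.7152 * g + 0.0722 * b) / 255;
  }
  return height;
}

// Usage: one white pixel followed by one black pixel.
const sampleHeights = diffuseToHeight(
  new Uint8ClampedArray([255, 255, 255, 255, 0, 0, 0, 255])
);
```

The resulting values could then be uploaded as a single-channel texture and sampled in a vertex shader to offset geometry along its normals.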

The scene setup included lighting, camera composition, and visual atmosphere, with GSAP controlling the cinematic camera animation. To direct the camera movement precisely, I used a custom editor and debug mode I built for projects that require camera sequences. It lets me simulate camera transforms, adjust position, target, and rotation values, and log them before translating them into the final GSAP animation sequence.
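The step from logged camera values to an animation sequence can be sketched roughly as follows. This is an illustrative example, not the editor's real code: the `CameraKeyframe` and `TweenStep` shapes and the `toTweenSteps` function are hypothetical names, and the sketch assumes each logged entry carries a timestamp in seconds along with a position and a look-at target.

```typescript
// Hypothetical shape of one entry logged by a camera debug editor.
interface CameraKeyframe {
  time: number; // seconds from the start of the sequence
  position: [number, number, number];
  target: [number, number, number];
}

// One tween between two consecutive keyframes.
interface TweenStep {
  at: number; // absolute start time on the timeline, in seconds
  duration: number; // seconds
  position: { x: number; y: number; z: number };
  target: { x: number; y: number; z: number };
}

// Sort the logged keyframes by time and emit one step per adjacent pair.
function toTweenSteps(keyframes: CameraKeyframe[]): TweenStep[] {
  const sorted = [...keyframes].sort((a, b) => a.time - b.time);
  const steps: TweenStep[] = [];
  for (let i = 1; i < sorted.length; i++) {
    const prev = sorted[i - 1];
    const next = sorted[i];
    const [px, py, pz] = next.position;
    const [tx, ty, tz] = next.target;
    steps.push({
      at: prev.time,
      duration: next.time - prev.time,
      position: { x: px, y: py, z: pz },
      target: { x: tx, y: ty, z: tz },
    });
  }
  return steps;
}
```

Each step could then be handed to a GSAP timeline, for example `timeline.to(camera.position, { ...step.position, duration: step.duration }, step.at)`, with a matching tween on the look-at target.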

The experience includes two interaction modes. In cursor mode, hovering over the avatar triggers the mouth animation directly, creating a simple interactive response between the user and the character. In camera mode, MediaPipe face tracking runs locally in the browser to detect the user's mouth gestures and map that movement onto the avatar, letting the character respond directly to the viewer's expression.
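The mapping from a detected mouth gesture to the avatar can be sketched as below. This is a minimal example under assumptions, not the project's implementation: it assumes the tracker exposes blendshape categories with a `categoryName` and a `score` between 0 and 1 (the shape MediaPipe's Face Landmarker uses when blendshape output is enabled), and that the `jawOpen` category drives the mouth. The `jawOpenScore` and `smooth` helpers and the smoothing factor are hypothetical.

```typescript
// Minimal shape of one blendshape category, assumed to match the tracker output.
interface BlendshapeCategory {
  categoryName: string;
  score: number;
}

// Read the jaw-open score, clamped to [0, 1]; return 0 when no face data is present.
function jawOpenScore(categories: BlendshapeCategory[]): number {
  const jaw = categories.find((c) => c.categoryName === "jawOpen");
  return jaw ? Math.min(1, Math.max(0, jaw.score)) : 0;
}

// Exponential smoothing so the avatar's mouth does not jitter between frames.
// factor = 1 follows the target immediately; smaller values move more slowly.
function smooth(previous: number, target: number, factor = 0.3): number {
  return previous + (target - previous) * factor;
}

// Usage: called once per frame with the latest tracking result.
let mouthInfluence = 0;
mouthInfluence = smooth(
  mouthInfluence,
  jawOpenScore([{ categoryName: "jawOpen", score: 0.8 }])
);
```

In a Three.js scene, the smoothed value would typically be written to the avatar mesh's `morphTargetInfluences` entry for the mouth blend shape on each frame. Cursor mode can reuse the same `smooth` function with a target of 1 while hovering and 0 otherwise.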

Level of detail (LOD) switching and other performance optimisations keep the experience lightweight and accessible across desktop and mobile.
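The core idea behind LOD can be sketched as choosing a lighter mesh as the camera moves further away. The example below is illustrative only: the `LodLevel` shape, the level names, and the distance thresholds are hypothetical, and the source does not state how LOD was wired up in this project. Three.js offers a built-in `THREE.LOD` object whose `addLevel(object, distance)` method performs the same kind of selection.

```typescript
// Hypothetical description of one detail level for a model.
interface LodLevel {
  name: string;
  minDistance: number; // camera distance at which this level starts to apply
}

// Pick the level with the largest minDistance that does not exceed the camera distance.
function pickLevel(levels: LodLevel[], distance: number): LodLevel {
  if (levels.length === 0) {
    throw new Error("At least one LOD level is required");
  }
  const sorted = [...levels].sort((a, b) => a.minDistance - b.minDistance);
  let chosen = sorted[0];
  for (const level of sorted) {
    if (distance >= level.minDistance) {
      chosen = level;
    }
  }
  return chosen;
}

// Usage with made-up thresholds.
const avatarLevels: LodLevel[] = [
  { name: "high", minDistance: 0 },
  { name: "medium", minDistance: 10 },
  { name: "low", minDistance: 30 },
];
const activeLevel = pickLevel(avatarLevels, 12);
```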

TECHNICAL DETAILS

Creative direction, 3D modelling, 3D animation, AI-assisted asset generation, asset optimisation, audio editing, React, Vite, React Three Fiber, Three.js, WebGL, GSAP, Blender, Tripo AI, blend shapes, mouth animation, custom skybox, shader distortion, displacement textures, lighting setup, camera choreography, custom camera debug editor, MediaPipe face tracking, LOD optimisation, Vercel deployment.

RESPONSIBILITIES

Creative Direction and Writing: Wrote the short story that inspired the piece and directed the full visual and interactive translation of it.

3D Avatar Pipeline: Generated the initial model with Tripo AI, then optimised, sculpted, and remodelled it in Blender. Created blend shapes for expressive mouth animation.

Environment and Shaders: Built the customised skybox environment and wrote shaders for scene distortion and depth, including displacement textures generated from diffuse maps.

Camera Choreography: Designed and implemented the cinematic camera sequence using GSAP, with a custom camera debug editor for precise transform control and logging.

Interaction Systems: Built cursor mode (hover-triggered mouth animation) and camera mode (MediaPipe face tracking for real-time gesture-to-avatar mapping).

Performance Optimisation: Applied LOD and asset optimisation strategies for smooth performance across desktop and mobile.

Deployment: Built and deployed on Vercel.